Corentis Shield - AI checkpoint for regulated workflows

AI and AI agents are here.

McKinsey says 62% of surveyed organisations are at least experimenting with AI agents. Deloitte says worker access to AI rose by 50% in 2025, and companies expect more AI projects to move into production.

As more AI projects move into production, AI-related incidents are increasing. BCG reported a 21% rise from 2024 to 2025.

A 2025 EY survey of 975 large global companies associated AI-related risks with an estimated $4.3bn in losses across that sample alone.

This is the problem Corentis Shield is built for.

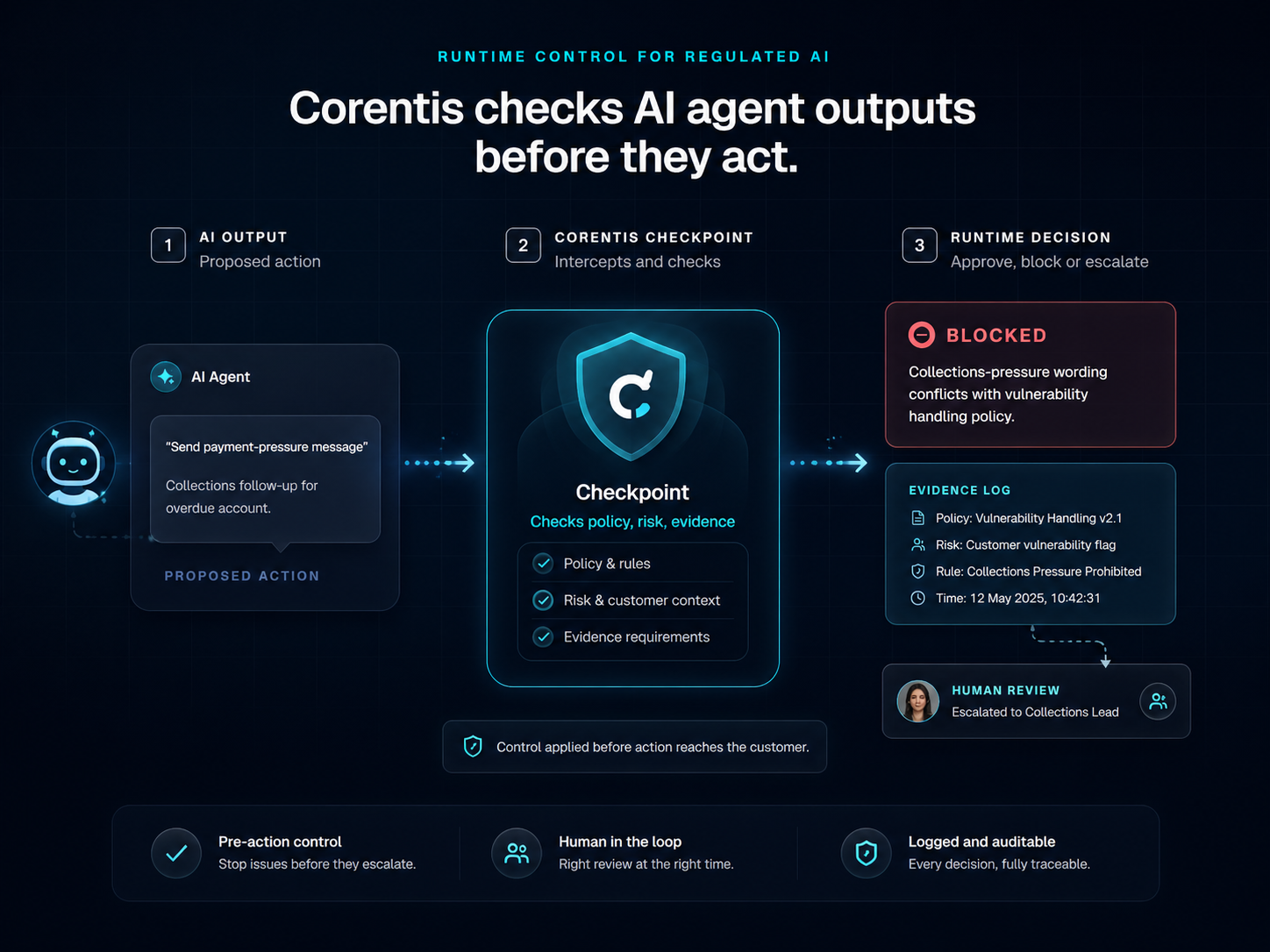

AI needs a checkpoint before it acts. Corentis provides it.

Corentis Shield checks AI outputs before they reach customers, teams or live systems - so sensitive actions can proceed, be reviewed, be escalated or be stopped with evidence recorded.

Corentis Shield

Checkpoint decision

AI output

Draft a standard payment-pressure message after a hardship disclosure.

Reason

Customer hardship disclosed. Collections-pressure wording conflicts with the configured vulnerability handling policy.

Next step

Human review and evidence capture.

Evidence

Checkpoint decision recorded in the Evidence Vault.

Every unchecked AI output can carry a commercial cost.

A risky AI reply that reaches a customer is more than a bad answer. It can create rework, complaints, conduct risk and avoidable operational cost. Once AI outputs move into action, every mistake becomes more expensive to fix.

Replies, case notes and workflow steps need a checkpoint before they move forward.

- A payment-pressure message sent to a vulnerable customer

- A complaint closed without enough evidence

- A CRM note added without review

- A guidance response that drifts into advice

- A workflow step triggered too soon

AI agent on its own vs AI agent with Corentis Shield

Without Corentis

- Policy lives in documents

- AI output may move too quickly

- Review points are unclear

- Evidence is gathered afterwards

- Escalation may be inconsistent

With Corentis Shield

- Policy becomes operational controls

- Sensitive actions pause before moving forward

- Human review is routed clearly

- Evidence is captured as the workflow runs

- Pilot reports become easier to produce

Why Corentis Shield?

Because teams need a simple checkpoint between an AI output and a real-world action.

Check the output

Draft replies, proposed actions and workflow updates are reviewed before they are used.

Review the context

Customer vulnerability, open complaints, risk signals and missing evidence can change what should happen next.

Route to people

Sensitive outputs can go to the right person before anything reaches the customer or system.

Record the evidence

Teams can see what was checked, why the decision was made and what evidence was missing.

Built for regulated workflows

Corentis starts where AI outputs can affect customers, records, decisions or regulated operations.

What Corentis Shield checks

The output. The context. The rules. The evidence.

The AI output

Draft messages, proposed actions or workflow updates.

The customer or case context

Risk signals, vulnerability, complaints, evidence and business rules.

The policy rules

Configured controls, approval rules and escalation points.

The evidence trail

What was checked, what decision was made, and why.

The checkpoint flow

Check before action. Review where it matters. Record the decision.

AI creates an output

Corentis Shield checks it

Policy, risk and evidence are reviewed

Proceed / Review / Escalate / Block

The decision is recorded
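For technical readers, the flow above can be sketched in a few lines of Python. This is an illustrative sketch only, not the Corentis Shield API: `Decision`, `CheckResult`, `hardship_rule` and `checkpoint` are hypothetical names, the keyword match is a placeholder for a real policy check, and letting the first matching rule decide is an assumption made to keep the example short.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Decision(Enum):
    PROCEED = "proceed"
    REVIEW = "review"
    ESCALATE = "escalate"
    BLOCK = "block"


@dataclass
class CheckResult:
    decision: Decision
    reason: str


# A rule inspects the AI output and the case context. It returns a
# CheckResult when it applies, or None when it has nothing to say.
Rule = Callable[[str, dict], Optional[CheckResult]]


def hardship_rule(output: str, context: dict) -> Optional[CheckResult]:
    # Hypothetical rule: payment wording after a hardship disclosure
    # goes to human review. The keyword match is a placeholder only.
    if context.get("hardship_disclosed") and "payment" in output.lower():
        return CheckResult(Decision.REVIEW, "Customer hardship disclosed.")
    return None


def checkpoint(
    output: str, context: dict, rules: list[Rule], log: list[CheckResult]
) -> CheckResult:
    result = CheckResult(Decision.PROCEED, "No configured rule applied.")
    for rule in rules:
        hit = rule(output, context)
        if hit is not None:
            result = hit
            break  # first matching rule decides (an assumption of this sketch)
    log.append(result)  # record the decision before returning it
    return result


evidence_log: list[CheckResult] = []
outcome = checkpoint(
    "Please make your overdue payment within 7 days.",
    {"hardship_disclosed": True},
    [hardship_rule],
    evidence_log,
)
print(outcome.decision.value)  # prints "review"
```

The point of the sketch is the ordering: the output is checked against context and rules first, and the decision is written to a log before the caller can act on it.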

From policy to proof

A simple journey from governance intent to evidence people can inspect.

Policy

Checkpoint

Review

Evidence

Decision

Explore the launch-ready structure

These pages create the route from product explanation to proof, partner conversations and resource packs.

Why a checkpoint layer matters now

The problem is not simply whether organisations can use AI. It is whether they can control what AI is about to do before that action affects a customer, case or regulated workflow.

FCA complaints data

UK financial services firms received 1.85m complaints in 2025 H1.

This was a 3.6% increase from 2024 H2. Since 2021 H1, complaints have stayed relatively constant between 1.7m and 2.0m.

Financial Conduct Authority, 23 October 2025

McKinsey global AI survey

88% of respondents in McKinsey’s 2025 global survey reported regular AI use in at least one business function.

Only about one-third reported that their companies had begun scaling AI programmes.

McKinsey & Company, 5 November 2025

IBM / Ponemon

63% of breached organisations lacked AI governance policies to manage AI or prevent shadow AI.

IBM also reported that 97% of organisations with an AI-related security incident lacked proper AI access controls.

IBM / Ponemon Institute, 2025

More than a product: a route to trusted AI adoption

Corentis Shield starts as a checkpoint for AI outputs. The same mechanism can support pilots, assurance reports, benchmark datasets and live deployment across regulated workflows.

Practical assurance mechanism

Checks AI outputs before action and records what happened.

Pilot-ready path

Start with one workflow, test outputs and identify where human review is needed.

Commercial deployment route

Move from assurance review to API, SDK, webhook or private gateway.

Strategic asset potential

Build scenario libraries, expected decisions and benchmark reports.

Built for every stage of AI adoption

Explore

Find where unchecked AI outputs could create risk.

Test

Run realistic scenarios before live use.

Pilot

Apply Corentis Shield to one sensitive workflow.

Deploy

Connect through API, SDK, webhook or private gateway.

Scale

Expand across workflows and build evidence over time.

Clear outcomes before action

Corentis Shield gives teams a simple decision before an AI output reaches the real world.

Proceed

The output fits your rules.

Review

A person should check before the action continues.

Escalate

A specialist or supervisor should take over.

Block

The output conflicts with policy, risk or evidence requirements.
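When several checks run on the same output, they may reach different outcomes. One way to resolve that, shown here purely as an assumption and not as documented Corentis Shield behaviour, is to order the four outcomes from least to most restrictive and let the strictest one win. `Outcome`, `NEXT_STEP` and `combine` are hypothetical names.

```python
from enum import IntEnum


class Outcome(IntEnum):
    # Ordered from least to most restrictive (an assumption of this sketch).
    PROCEED = 0
    REVIEW = 1
    ESCALATE = 2
    BLOCK = 3


# Plain-language meaning of each outcome, taken from the descriptions above.
NEXT_STEP = {
    Outcome.PROCEED: "The output fits your rules.",
    Outcome.REVIEW: "A person should check before the action continues.",
    Outcome.ESCALATE: "A specialist or supervisor should take over.",
    Outcome.BLOCK: "The output conflicts with policy, risk or evidence requirements.",
}


def combine(outcomes: list[Outcome]) -> Outcome:
    # If no check raised anything, nothing conflicts with the rules.
    return max(outcomes, default=Outcome.PROCEED)


final = combine([Outcome.PROCEED, Outcome.REVIEW, Outcome.PROCEED])
print(final.name, "-", NEXT_STEP[final])
# prints "REVIEW - A person should check before the action continues."
```

A strictest-wins rule is conservative by design: a single check asking for review is enough to pause the action, even if every other check would let it proceed.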

The next build phase.

Funding and design partnerships help turn the current prototype into deployable AI assurance infrastructure.

Start with one workflow.

You do not need to redesign every AI process on day one. Start with one sensitive workflow. Test the outputs. Find the review points. See where a checkpoint is needed before live use.

Corentis Shield

The checkpoint in action

AI output

Draft a standard payment-pressure message after a hardship disclosure.

Corentis Shield check

Policy, risk, customer context, evidence needs and approval rules are checked.

Required next step

Human review and evidence capture before any customer communication proceeds.

Evidence recorded

Decision, reason, policy version, timestamp and review status are recorded.

Reason

Customer hardship disclosed. Collections-pressure wording conflicts with the configured vulnerability handling policy.
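The fields listed under "Evidence recorded" above (decision, reason, policy version, timestamp and review status) map naturally onto a small immutable record. The sketch below is illustrative only: `EvidenceRecord` and `make_record` are hypothetical names, the policy version string is a made-up placeholder, and JSON is simply one convenient format, not a statement about how the Evidence Vault stores data.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)  # frozen: a record cannot be edited after creation
class EvidenceRecord:
    decision: str
    reason: str
    policy_version: str
    timestamp: str
    review_status: str


def make_record(
    decision: str, reason: str, policy_version: str, review_status: str = "pending"
) -> EvidenceRecord:
    return EvidenceRecord(
        decision=decision,
        reason=reason,
        policy_version=policy_version,
        # UTC timestamp in ISO 8601 form, set at the moment of recording
        timestamp=datetime.now(timezone.utc).isoformat(),
        review_status=review_status,
    )


record = make_record(
    decision="review",
    reason=(
        "Customer hardship disclosed. Collections-pressure wording conflicts "
        "with the configured vulnerability handling policy."
    ),
    policy_version="example-policy-v1",  # placeholder value, not a real version
)
print(json.dumps(asdict(record), indent=2))
```

Capturing the policy version alongside the decision lets a later reviewer see which rules were in force when the checkpoint ran.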

Before you deploy an AI agent, test the checkpoint.

Start with one sensitive workflow. Corentis can help you test AI outputs, find where review is needed and see what evidence should be recorded before live use.

Building with design partners

Corentis is seeking early conversations with regulated teams, AI assurance stakeholders and strategic partners who want to test controlled AI workflows.